How to be your own robot when wearing augmented reality glasses

Abstract

When imagining future robot life, we’re used to thinking of humanoid robots mimicking human behavior. But with the upcoming age of the augmented reality wearable, there’s a transition in the opposite direction too: people turning into robot-like beings. With an “always on” HUD (heads-up display), a person wearing AR glasses turns into a controllable entity, receiving guidance from the cloud throughout the day in a very directive way. Except when we put it aside, of course. Or when we decide not to wear one. But it’s very likely we’ll eventually start wearing a pair of AR glasses. And while these devices are being presented as tools that will enhance our individual lives, they will have an impact on our society as a whole. Let’s look at the reasons why it will be so difficult to avoid ending up in the AR ecosystem and why, once in, it will be difficult to keep your own agency.

1.The age of the AR wearable is coming

We are already connected beings, sharing our data with the cloud and following the instructions from our smartphone, but we can theoretically still ignore the influence of the device by not pulling it out of our pocket when it is buzzing or beeping. But in reality, who succeeds in ignoring the advice of an app like Google Maps telling you there’s a “better” route avoiding congestion? With AR wearables it will be just as difficult, if not more so. The “in-your-face” instructions will be hard to ignore. But don’t we have the choice of not putting the device on our face? Maybe in the first stage of our use of AR wearables. But do we ever leave home without our smartphone nowadays? Likewise, in the near future we might miss our HUD when we walk around without one. The specific reason why it will become difficult to shut these devices down or put them aside is that they will treat us better and better day by day. The better the device gets, the more we’ll wear it, which in turn feeds an accelerating loop of performance improvement.

Looking at the bulky AR wearables of today, it is hard to imagine that we’ll ever wear them throughout the day, non-stop. They are heavy and their batteries don’t last long. But with the amount of research and effort now being put into developing a lightweight, fashionable and comfortable pair of AR glasses, chances are that the technical and practical hurdles of today will vanish. Bulky AR headsets full of ultra-precise tracking hardware might still be the ones we’ll see on factory floors, but in our personal lives we’ll be using a different model. Even without detailed 3D tracking there’s plenty of AR functionality that works quite well based on the analysis of what we see and what we encounter, which can be achieved by continuously monitoring the camera feed.

It’s exactly this feature that explains why big tech will do anything it can to have us wear its devices. Imagine Facebook knowing where you are, what you’re looking at and what you like, without being dependent on your thumbs-up click. By parsing the data of your Quantified Self sensors and matching that with an analysis of what you see, the big tech companies will gain unparalleled insights into your life. Even the “likes” of other people looking at you or listening to you might be parsed automatically by scanning facial expressions, to enhance your cloud-based profile. Of course we’ll still be offered the opportunity to opt out, but we’ll be lured into accepting extensive monitoring and data processing for the sake of making the quality of service of our device (and the cloud) better and more tailored to our needs.

There’ll be no opt-out for the people around someone wearing AR wearables. That’s a big difference compared to the start of the smartphone era. This time, having access to a parallel digital universe is not just a personal matter. People within your field of vision will automatically be part of the mixed reality environment too. Will there be a need for a “detect-me-not” register? Unfortunately, people trying to stay out of the AR ecosystem might be the very ones unwilling to register their faces in such a system. Trying to stay away from a mixed reality world might become an unattainable goal. Without knowing it, we’ll find ourselves encountering people wearing subtle smart glasses, and a contemporary “Computer says no” situation (Fig. 1) will unfold when the people in front of us say no because they are following the instructions and suggestions from their device.

Fig. 1.

“Computer says no” anno 2030.

2.Our future life

There will be a growing set of use cases, apps and situations in which our AR wearable will play a role. It might seem that the opportunities to extend our capabilities and to enhance our lives are endless: we install apps to support us with various tasks and configure them to our specific needs and wishes. For example, when operating new machines or new interfaces we’ll eagerly accept the offered AR guidance. We’ll follow the instructions from our AR way-finding app. Perhaps even inside a shop we’ll agree to see some products blurred, to direct our focus to products we actually need based on an analysis of our health data.

Studying patent applications about AR is a good way to take a peek into our future life. You’ll find out how our AR device is going to keep a visual memory of everything we see, say and do, so we can easily run object-based queries on our own history and never forget or lose anything. By reading articles and books or watching science fiction movies and series, we can think ahead about the dilemmas we’re going to encounter in the future. What if features are on by default? When do we decide to turn them off? When meeting people, would we allow our own face recognition app to take control of the conversation and show the right things to say in our HUD? Would we want to see weekly stats on the number of people we have met and how many of them seemed to have enjoyed meeting us?

In our future life we will be overwhelmed by configuration choices all the time, because our AR wearable will start intervening in more and more of our activities. It’s an important aspect often ignored in high-tech showcases, which tend to show only a tiny bit of our improved future life, configured to work well in the most perfect context or situation. Critical science fiction scenarios, in turn, most often focus on one dramatic aspect of our doomed future. But what will our future life be like on a regular, day-to-day basis? What can we expect from our AR devices, and what do these devices expect from us? What will be our role? Is there any role for us? What will it be like to live our life as a robot, configuring and extending our behavior with scripts and apps to install “on ourselves”?

3.A hands-on peek into the future

An endless enumeration of conceptual apps and their configuration choices is not a very accessible or pleasant way to get a good feeling for what’s on the horizon. Also, each individual will have a personal approach towards innovation, accepting certain technologies and resisting others. To encourage concrete thinking about our own future, I have created the “Futurotheque” (futurotheque.com). This experimental interactive narrative form is based on a fictional app store that gives a hands-on peek into the future (Fig. 2) through a set of speculative apps. Most apps that might come into existence during the next decade cannot be implemented yet, for various reasons. But what is possible is to present users with the speculative configuration options belonging to these conceptual apps, letting them install the apps “for real”. As a result, they’ll get additional requests and messages based on the configured preferences. The fictional apps and the notifications from the fictional operating system confront users with the dialogues we can expect to have with our technology ten years from now, when we’ll be wearing AR glasses and be fully connected to the cloud through apps and scripts. We can think about some of the looming dilemmas and choices right now, make up our minds and take a stance towards these developments before they happen, instead of after the fact. Are we ready to wake up in the morning and specify our preferred level of agency for that day?

Fig. 2.

Screenshot of the “Futurotheque” web-app.

4.Enhance yourself

There will be a booming marketplace for scripts, apps and cloud-based applications to support us with certain tasks in life. It’s like how people shopped for virtual clothing and behaviors in the virtual avatar world “Second Life”, where newbies had clumsy movements, but long-term residents had the Linden Dollars to buy the scripts that let their avatar become a superb salsa dancer, for example. In the future, we will all become some sort of avatar IRL. Our quality of life will depend on how we program our digital selves to interact with the world. And the level of quality we can reach might depend on the brand of the device we’re wearing. A heavy patent war is being fought over the functionality of AR glasses. As a result, perhaps our future society will come in only a few flavors: what patents will Apple have secured for its users, and how will they lead their AR life? What activities will Facebook or Google users excel in? And what about the wearers of inferior clones?

The divide between people wearing AR glasses and those who are not is not going to be the only divide we’ll experience in the future. There might be a new digital divide between the “cans” and the “cannots”, based on the capabilities and the features of the AR device someone is wearing and the software running on it. Will people with money have a more meaningful or better (AR) life? Will there be a digital divide along a financial axis? Or will the divide manifest itself along an axis of technical insight? Will our quality of life be dependent on how well we can master our device? Will the devices be open enough to let us configure ourselves according to our own ideas? Can we choose the augmented world we want to see and experience? Can we program our own scripts to react to specific triggers? And if not, will we gain extra benefits by hacking our devices to enable apps from unauthorized and/or foreign app stores? Perhaps an illegal Chinese face tracking app?

5.Freedom of choice

The hardware suppliers will do their best to avoid unauthorized use and to limit the risk of bad user behavior. So they’ll not launch their devices with an open platform, but with a thoroughly monitored and controlled portal giving access to verified apps only. They’ll do that to protect the world from harm, which is indeed a serious threat once AR devices have turned a majority of the population into centrally controlled beings. But foremost, there are business reasons to prevent harmful or meaningless apps from having a spot in the app store. A bad user experience caused by external software also has a negative effect on the public perception of the hardware and its ecosystem. When looking at the guidelines for the current iOS App Store, for example, the criteria that apps need to meet are an indication of the frictionless world we’re about to enter when we see the world through the eyes of big tech. App Store apps need to be exciting, relevant, easy to use and predictable, and they should avoid unexpected outcomes. There will be no room for rebellious apps that criticize the system they’re part of.

But there might be more apps that will not be available on our device. A vendor lock-in is likely to occur because our hardware will come with an app store from the same source. We might have to do without the popular apps from competing brands. Or they will be available but work unreliably, being pulled from the store constantly as a result of new rules and regulations. Most probably, big tech companies will find their tricks to annoy competitors, create obstacles for them and keep their apps off their devices. Will Apple allow users of its AR wearables to have a Facebook app that monitors all their activities and sends a continuous video stream to Facebook using 5G? There is going to be a fierce battle between the big tech companies for total control of our eyeballs. They want to know what we see, and to control what appears in front of our eyes.

What we’ll get to see in the mixed reality around us will be defined by business models. Even if there seems to be no business model involved, there will eventually be a commercial mechanism underneath. It’s common knowledge that when you use software for free, you’re the product. Some companies promise not to mine your data when you choose to use the paid versions of their software or services. But in the age of AR wearables, the information that can be mined from users will be so valuable that buying our way out will be unaffordable. What appears in front of our eyes and what doesn’t show up will be based on mechanisms in which our human needs and interests are not central. If the AR wearable is going to be a device with such a big impact on our lives and on our society, that is not acceptable. We shouldn’t accept being unaware of the mechanisms inside the black boxes that are influencing us.

6.Algorithms

We need to be able to decide what kind of algorithms define what we see and do when we turn into robots obeying the instructions appearing in our HUD. For some aspects of our life, we might accept an AI algorithm telling us what to do and what not to do. But in some situations, we might insist on knowing how and why an algorithm has made its decision to let us do something. Instead of the undecipherable arguments of an AI system we might, theoretically, prefer to have an “IF THIS THEN DO THAT” style approach for certain tasks. In practice, though, it will be too much work to fully program yourself from scratch (ifthistheni.com).
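
To make the “IF THIS THEN DO THAT” idea a bit more tangible, here is a minimal sketch in Python of such a rule set. Everything in it is a hypothetical illustration and not part of any existing AR platform: the `Rule` class, the `decide` function and the context keys `location` and `blood_sugar` are all assumptions. The point of the sketch is only that every instruction can be traced back to a human-readable rule, which is exactly what an opaque AI system does not offer.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical context: a plain dict describing what the wearable currently senses.
Context = dict


@dataclass
class Rule:
    name: str                             # human-readable label, doubles as the "why"
    condition: Callable[[Context], bool]  # IF THIS ...
    action: str                           # ... THEN DO THAT (an instruction for the HUD)


def decide(rules: list[Rule], context: Context) -> Optional[tuple[str, str]]:
    """Return (action, reason) for the first matching rule, or None if no rule fires."""
    for rule in rules:
        if rule.condition(context):
            return rule.action, rule.name
    return None


# Example rules; the keys and thresholds are made up for illustration.
RULES = [
    Rule(
        "low blood sugar in a shop",
        lambda c: c.get("location") == "shop" and c.get("blood_sugar", 5.0) < 4.0,
        "highlight snacks",
    ),
    Rule(
        "in a shop",
        lambda c: c.get("location") == "shop",
        "blur products not on the shopping list",
    ),
]

if __name__ == "__main__":
    print(decide(RULES, {"location": "shop", "blood_sugar": 3.5}))
    print(decide(RULES, {"location": "street"}))
```

Rules are checked in order and the first match wins, so the more specific rule is listed first. Even this toy version shows why programming yourself from scratch does not scale: every situation needs its own explicit rule.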

Most of the time, machine learning and AI are the only proper ways to organize our digital guidance or assistance. But how can we use AI and still be in control? We’ll never get an insight into the algorithms developed by the big tech companies; these are business secrets. We won’t be given the opportunity to use the hardware and replace the algorithms. Not even if regulation were to force the big tech companies to modularize their hardware and software so we can shop around and be free to choose the algorithms and engines that will define our life. Don’t expect to see a rise of independent alternative software and hardware providers. They don’t stand a chance. In the intense patent battle for control of the lucrative Mixed Reality world, promising innovations or start-ups will either be bought and integrated, or be sued and destroyed.

But fortunately, there’s one way out. As individuals, our non-commercial creations will be free of patent claims. So it’s time to take matters into our own hands. We need to get started with DIY AI and avoid being dependent on the collected data and insights Google or Facebook or Apple are gathering about us. We need to get started right now with building up a pile of data about ourselves and working on our own scripts that mine and enrich that data. Only in that way can we work on a proper competitive alternative to the big tech mechanisms that have an influence on our own lives.

And there are various other reasons why our AI-based life will benefit from having us at the steering wheel. For AI to work well, it’s important that our “personal cloud” understands us well enough. By collecting our own data, we can make sure that we’re training our scripts on the right dataset, obtained on the right occasions. By organizing and controlling our own data collection, we can even arrange to create multiple profiles with the right data. We can distinguish multiple modes of being: we can switch between work mode and our personal life, and we do not just have to hope that Google or Apple will guess our context right. We might even be in incognito mode sometimes, not collecting data at all.
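
The idea of separate profiles and an incognito mode can be sketched in a few lines of Python. This is an illustrative sketch under assumptions, not an existing tool: the class name `PersonalDataStore`, the mode names and the string observations are all hypothetical.

```python
from collections import defaultdict

INCOGNITO = "incognito"  # hypothetical mode name: nothing is recorded


class PersonalDataStore:
    """Keeps observations in separate per-mode datasets (e.g. "work", "personal")."""

    def __init__(self, mode: str = "personal") -> None:
        self.mode = mode
        self._datasets = defaultdict(list)

    def switch(self, mode: str) -> None:
        """Change the current mode of being; we decide, nobody guesses our context."""
        self.mode = mode

    def record(self, observation: str) -> bool:
        """Store an observation under the current mode; return False if it was dropped."""
        if self.mode == INCOGNITO:
            return False
        self._datasets[self.mode].append(observation)
        return True

    def dataset(self, mode: str) -> list:
        """Return a copy of the data collected in the given mode."""
        return list(self._datasets.get(mode, []))


if __name__ == "__main__":
    store = PersonalDataStore()
    store.record("coffee with a friend")
    store.switch("work")
    store.record("project meeting")
    store.switch(INCOGNITO)
    store.record("this is never stored")
    print(store.dataset("personal"), store.dataset("work"))
```

Because each mode has its own dataset, a script trained on the work data never sees the personal data, and whatever happens in incognito mode leaves no trace at all.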

7.Be your own robot

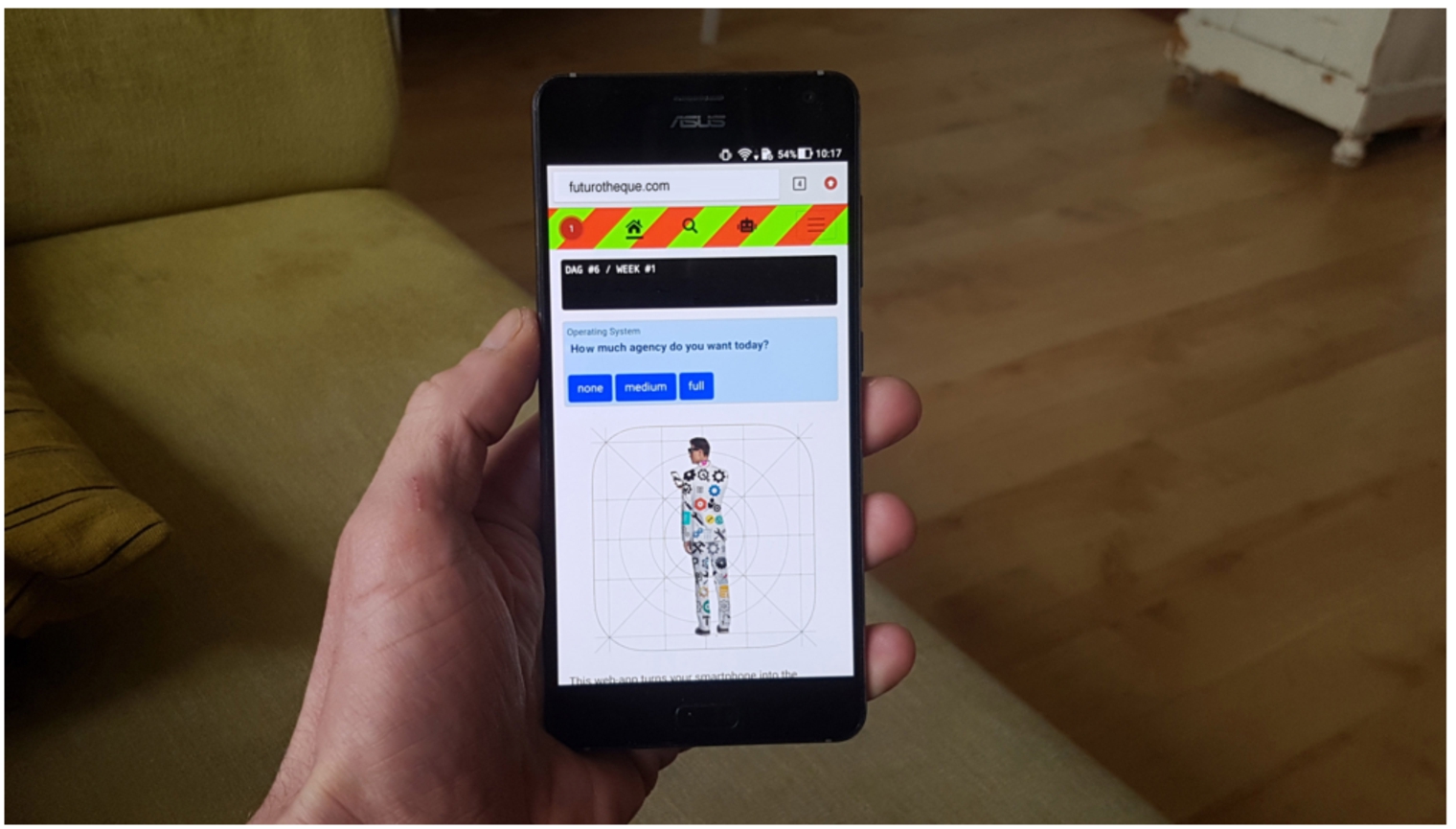

If the gradual transition into an augmented society is going to be unavoidable, a number of things need to be fixed. We shouldn’t accept having only partial control over the devices that are controlling us. We want all the apps. We want all the configuration options. We even want the freedom to pick our preferred algorithms, or program our own scripts.

The task of managing our digital self will be an ICT project on a scale that many of us will not be willing or able to carry out. We can’t expect everyone to become an AI expert. So collaborating and sharing scripts with a community will be the only way to achieve this. There’s only one slight drawback if we all share and use the same smart scripts: we’ll turn into interchangeable robots (Fig. 3). So in whatever way we enter our future AR society, it will be a challenge to be your own robot.

Fig. 3.

Personal configuration.
